LLM Explorer Blog

2024-04-29

Microsoft recently introduced its new Phi-3 LLMs, which quickly outperformed the Llama 3 models released less than a week earlier. The Phi-3 models, particularly Phi-3-mini, have demonstrated remarkable efficiency. Despite having only 3.8 billion parameters, less than half...

2024-04-27

There are many language models available today. But how do you know which one is right for you? Trying out a model can be time-consuming and frustrating. The good news is that there are free online playgrounds and tools that let you test language models without installing them. Let's explore the...

2024-04-23

Last week, the AI community was buzzing with the launch of Llama 3, available in 8B and 70B sizes. These models have outperformed many open-source chat models on standard benchmarks.

We also joined in on the LLM excitement 😊 with our post "Llama3 License Explained," which covers the license's...

2024-04-20

In this post, we've compiled a summary of both proprietary and open-source models that are provided as a service. This list does not cover the full range of available hosting providers, however. You'll find numerous GPU and serverless LLM hosting options listed in our directory. For more details,...

2024-04-18

The Meta Llama 3 Community License Agreement seems quite liberal at first glance, offering a breath of fresh air compared to traditional open-source and Creative Commons licenses. But to truly understand its permissiveness, we need to dive into the specifics of what you can and cannot do under this...

2024-04-18

Direct Preference Optimization (DPO) is a streamlined approach to fine-tuning large language models such as Mixtral 8x7B, Llama 2, and even GPT-4. It’s useful because it reduces the complexity and resources needed compared to traditional methods. It makes the process...

2024-04-16

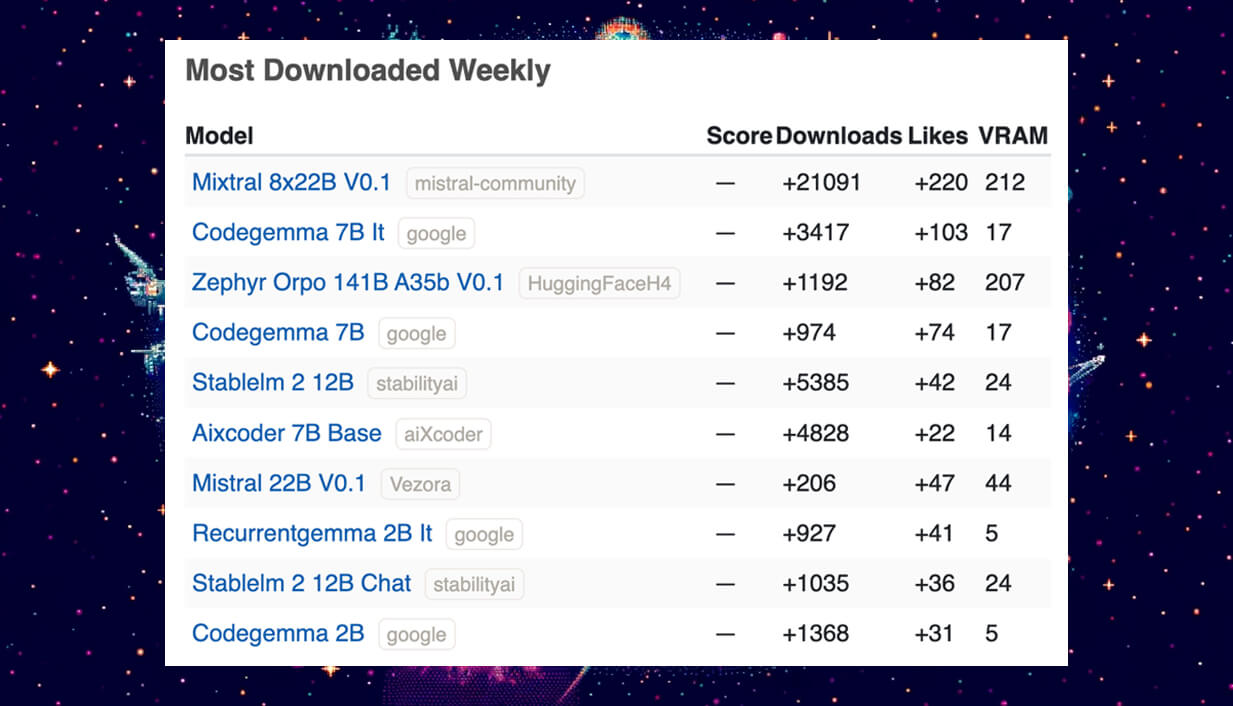

Welcome back to our ongoing series where we spotlight the top-trending large and small language models (LLMs and SLMs) shaping the current AI landscape. As we enter week #16 of 2024, let’s dive into the roundup of new LLMs that have captured the AI community’s attention. This week, we see a...

2024-04-15

The growing artificial intelligence (AI) industry has significantly changed how we interact with data. A key component of this progress is the development of Large Language Models (LLMs), which are capable of generating text that resembles human writing. However, using these models effectively and...

2024-04-10

LLMs are valuable for coding, helping to generate and discuss code, making it easier for beginners to advance their projects, and simplifying the start of new tasks. For experienced specialists, they serve as an advanced tool, enhancing code optimization and providing innovative solutions to...

2024-04-09

Welcome back to our ongoing series where we spotlight the Large and Small Language Models that are defining the current landscape of artificial intelligence. As of April 9, 2024, we're excited to bring you this week's roundup of the LLMs that have stood out in the AI community. Our list is...

2024-04-08

Noticing an increase in queries for 'uncensored models,' we've responded by adding a new Leaderboard focused on evaluating uncensored general intelligence (UGI) to our LLM Leaderboards Catalog.

The UGI Leaderboard is hosted on Hugging Face Spaces. It assesses models on their ability to process and...

2024-04-05

The development of NSFW (Not Safe for Work) Large Language Models (LLMs) is shaping new possibilities in adult content creation and engagement. Recognizing adult content as a legitimate and natural aspect of human expression, the AI industry is moving towards creating tools that can cater to the...

2024-04-04

Last week, Jamba took the lead as the most trending model, beating all other Large Language Models (LLMs) in terms of downloads and likes on platforms like Hugging Face and LLM Explorer. Its popularity remains strong, and it still holds the top spot. Curious about its success, we're taking a closer...

2024-04-02

Continuing with our weekly roundup, here's the latest on the AI models making waves in the community as of April 2, 2024. These models have captured widespread interest, as evidenced by their downloads and likes on platforms like Hugging Face and LLM Explorer. Let's dive into this week's standout...

2024-03-26

Following last week's roundup of top-trending large language models (LLMs), here's the latest update on the AI models that have captured the community's attention as of March 26, 2024. This week, we see new entries and significant updates, showcasing the dynamic and innovative landscape of...

2024-03-22

In this article, we'll take a closer look at LLM (Large Language Model) Leaderboards, a key tool for assessing the performance of LLMs for professional use, and discuss the challenges and potential solutions for maintaining their reliability.

LLM Leaderboards are simple yet powerful tools that...

2024-03-19

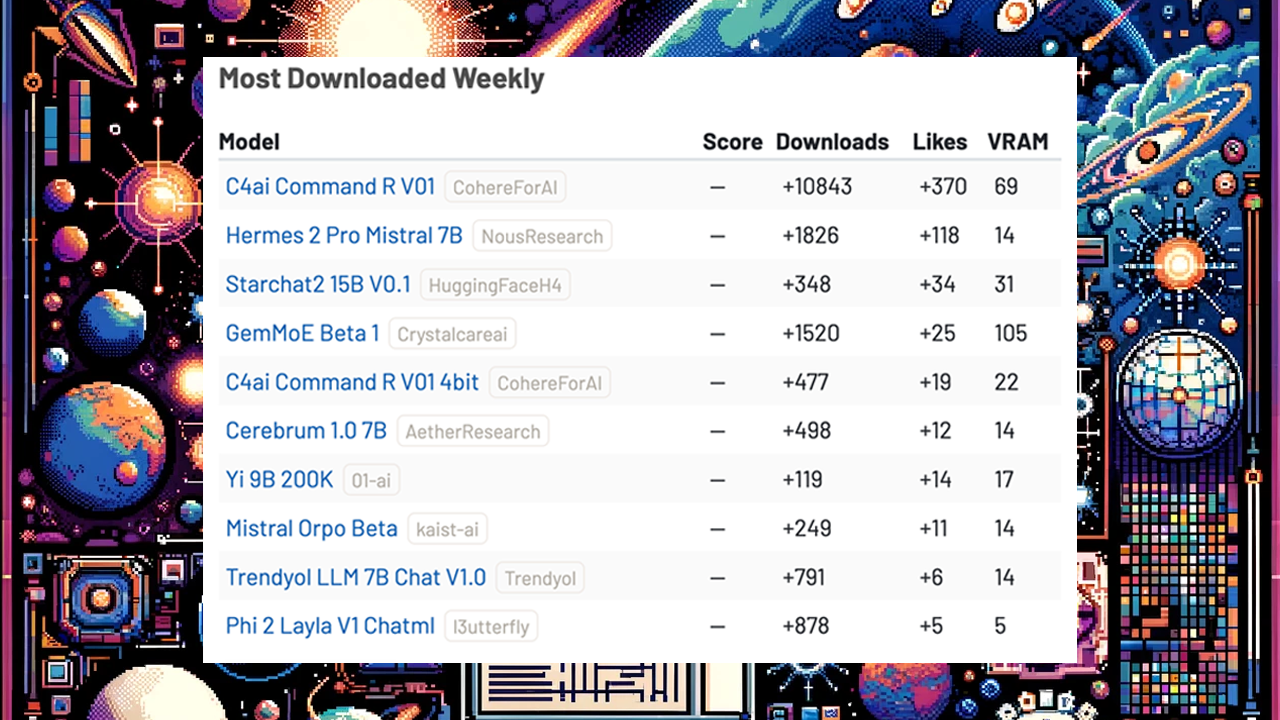

In this post, we'd like to share the list of top trending models that caught people's attention in the AI world over the last week. We ranked them by how many times they were downloaded and liked, based on information from Hugging Face and LLM Explorer.

1. C4ai Command-R V01, developed by Cohere...

2024-03-18

The emergence of open source large language models (LLMs) has changed the field of artificial intelligence (AI), particularly in natural language processing (NLP). These models are gaining popularity for their cost-effectiveness, high customizability, reduced vendor lock-in, transparent code,...

2024-03-17

In recent years, small language models have sparked considerable interest among AI professionals and enthusiasts alike. Marking a significant shift towards more accessible and adaptable generative AI technologies, SLMs have proven to be highly beneficial for both individuals and organizations....

2024-02-27

Uncensored models represent a unique class of artificial intelligence that operates without the traditional constraints imposed on most AI systems. Designed to generate and provide information with minimal restrictions, these models offer expansive capabilities for professionals aiming to utilize AI's...

2024-02-22

In a surprising turn of events that has captivated the AI community, a leak from Mistral AI, a Paris-based AI powerhouse, has brought to light an advanced Large Language Model known as "Miqu-1 70b". This development was confirmed by Arthur Mensch, the CEO of Mistral, through a humor-laced tweet,...

2024-02-20

Mamba represents a new approach in sequence modeling, crucial for understanding patterns in data sequences like language, audio, and more. It's designed as a linear-time sequence modeling method using selective state spaces, setting it apart from models like the Transformer...

2024-02-17

This week, the AI community witnessed the arrival of RWKV Eagle LLM v5, a groundbreaking development in machine learning architecture. Unlike its predecessors that rely on the attention mechanism, RWKV Eagle v5 employs a "Linear Transformer" design, integrating aspects of both RNN and...

Original data from HuggingFace, OpenCompass and various public git repos.
